TL;DR

- Product feature requests are specific suggestions from users about new capabilities, integrations, UI changes, or improvements. They're distinct from general customer feedback, which is the broader category.

- The best collection channels: in-app widgets, feedback buttons, email surveys, sales and CS team captures, customer advisory boards, and community/public roadmap boards.

- Target who you ask, not just how you ask. Free trial users request different things than your power users. Segmenting before you send gets you better signal.

- A good intake form captures the what, the why, the workaround the user has now, and whether this is a blocker for them.

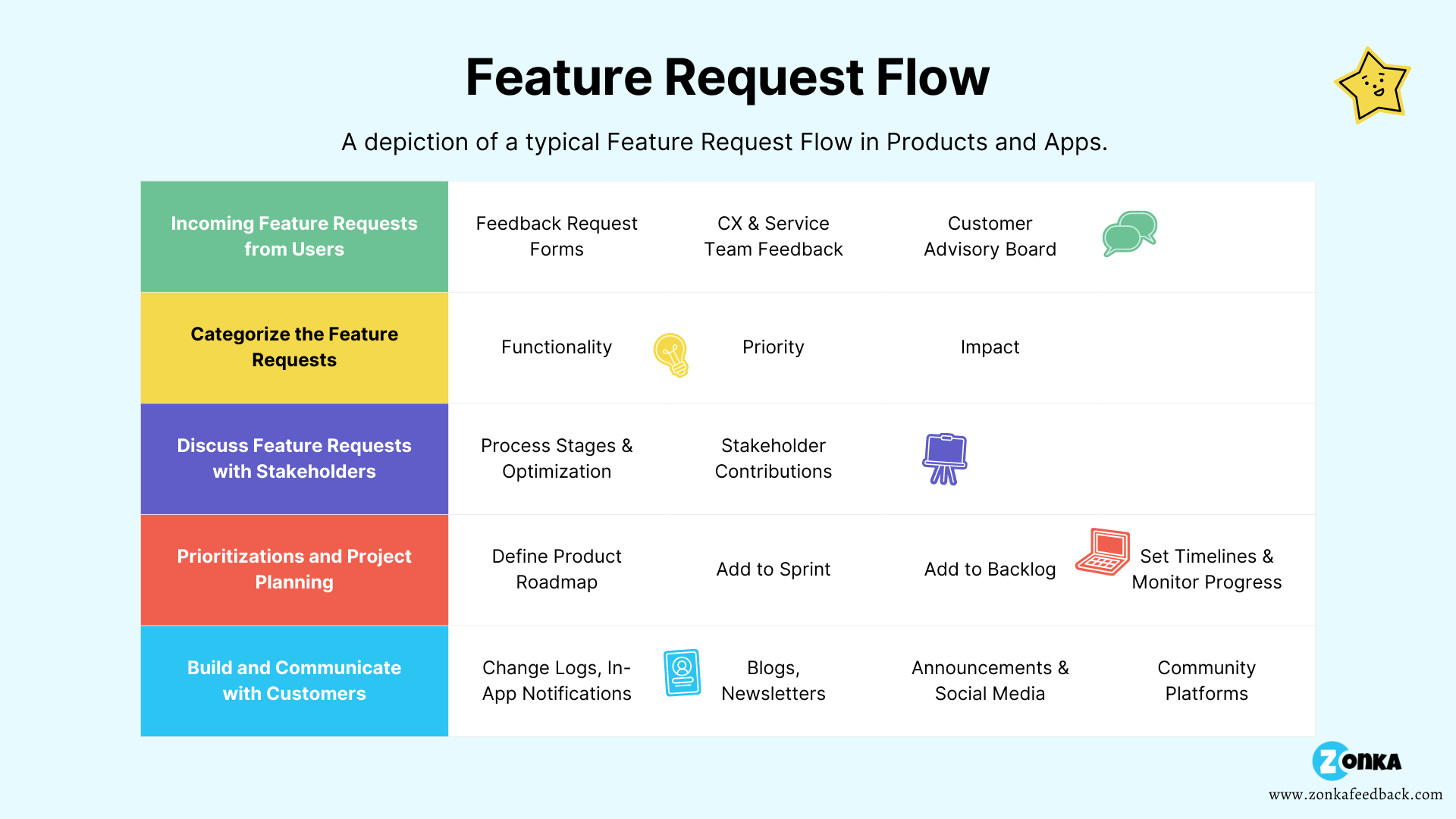

- This post covers everything up to the moment a request lands in your system. For what happens after (prioritization frameworks, stakeholder decisions, saying no), see the guide to handling customer feature requests.

Most product teams think their feature request problem is a collection problem.

It isn't.

Requests are already arriving: through support tickets, sales calls, NPS comment fields, offhand Slack messages from the CS team, and the occasional heartfelt email at 11pm from a power user who really needs a CSV export. The collection is happening. It's just scattered across five channels that don't talk to each other, stored in different places, and visible to no one in a way that's actually useful.

That's the real problem. Not whether to collect feature requests, but whether you've built a system that makes them mean something.

This guide covers the full intake side: what to ask, where to collect, how to target the right users, and how to set up a system that doesn't fall apart the moment requests start coming in at volume. Once a request is captured and acknowledged, the next question is what to do with it. That's its own conversation.

What Are Product Feature Requests?

Product feature requests are suggestions from users about new capabilities, changes to existing features, integration support, or UI improvements, submitted to help product teams understand what customers need next.

They arrive as formal submissions, support ticket comments, sales call notes, community upvotes, and the kind of detailed email that tells you a customer has been thinking about this for weeks. They're valuable not because every one of them should be built, but because the pattern of them tells you something true about how your product is actually being used.

Feature requests typically fall into four categories:

- New features: capabilities that don't exist yet

- Feature improvements: existing capabilities that are incomplete or behaving unexpectedly

- Integration requests: connections to other tools in the customer's stack

- UI/UX changes: how something looks, where it lives, or how it behaves

Here's a distinction worth making clearly: feature requests and customer feedback aren't the same thing. Customer feedback is the broader category. It includes satisfaction scores, complaints, praise, NPS comments, and general sentiment about the product. A feature request is a specific subset: it's a customer proposing a solution to a problem they've already diagnosed. That's useful information. But the problem behind the request is often more useful than the request itself. A user asking for a CSV export might actually be telling you your reporting doesn't work for their workflow. The request is the surface. The use case is what matters.

For the broader context on how feature requests fit into your overall product feedback program, see the pillar guide, which covers the full picture.

What to Ask in a Product Feature Request Survey

Short forms get filled out. Long ones don't.

HubSpot keeps its feature request form to three fields: subject, description, and category. That's not a limitation. That's a decision. The goal isn't to extract a product spec from a customer. It's to capture enough context to have a useful internal conversation.

Here's a question set that balances signal with simplicity:

- What feature would you like us to add or improve?

- How would you use it, and what problem does it solve for you?

- What's your current workaround?

- Have you seen this done well in another product?

- On a scale of 0–10, how important is this to your workflow?

- Would this affect whether you stay on the product?

The last two questions are the ones most teams skip. They're also the ones that turn a list of feature ideas into a prioritized signal. Knowing twelve customers want a feature is different from knowing three of them called it a potential blocker. That distinction is what makes the intake form worth having at all.
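To make that distinction concrete, here is a minimal sketch of how answers from a form like this could be stored and summarized. The field names, the `summarize` helper, and the sample customers are all hypothetical and not tied to any particular survey tool.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class FeatureRequest:
    """One intake-form submission. Field names are illustrative only."""
    customer: str
    feature: str      # what they want added or improved
    use_case: str     # how they'd use it and what problem it solves
    workaround: str   # what they do today
    importance: int   # answer to the 0-10 importance question
    is_blocker: bool  # answer to "would this affect whether you stay?"


def summarize(requests):
    """Per feature: requester count, blocker count, and mean importance."""
    grouped = defaultdict(list)
    for request in requests:
        grouped[request.feature].append(request)
    summary = {}
    for feature, items in grouped.items():
        summary[feature] = {
            "requesters": len(items),
            "blockers": sum(1 for r in items if r.is_blocker),
            "avg_importance": round(sum(r.importance for r in items) / len(items), 1),
        }
    return summary


# Made-up sample data for illustration.
requests = [
    FeatureRequest("Acme", "csv export", "monthly reporting", "copy-paste", 9, True),
    FeatureRequest("Globex", "csv export", "share with finance", "screenshots", 6, False),
    FeatureRequest("Initech", "dark mode", "late-night use", "none", 4, False),
]
print(summarize(requests))
```

The summary keeps the blocker count separate from the raw requester count, so a feature with fewer requests but more blockers doesn't get buried.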

A few principles worth keeping when you build the form:

- One form, everywhere. Use the same form across in-app, email, and web. Different audiences, not different forms.

- Skip the jargon. If a question requires product-team vocabulary to answer, it's too technical for most users.

- Mix question types. Open-ended questions get you the why. Rating and multiple-choice questions give you the data to rank.

"We have a voice of the customer program and that team's accountability is to bring both quantitative and qualitative data about customers and their experiences. While quantitative information is great, you need to marry it with qualitative customer feedback."

Yamini Rangan, CEO, HubSpot

That balance matters more than either alone. A 9/10 importance rating with a blank description tells you very little. A detailed description with no scale score is hard to prioritize against anything else. Both fields earn their place.

Zonka Feedback's survey builder lets you build a form like this in minutes. You can start from the product feature request template and adjust from there.

How Do You Collect Product Feature Requests?

The strongest programs don't rely on one channel. They make it easy for users to share requests wherever they already are, and they route everything to one place. Here's what each channel actually gives you, and where each one earns its keep.

In-App Surveys and Feedback Widgets

In-app collection has one advantage none of the other channels match: timing. When a user hits a limitation mid-workflow, the frustration and the use case are both fresh. They can describe the problem exactly as they're experiencing it, not reconstruct it from memory later.

In-app widgets also lower the submission barrier. No separate form to find, no portal to log into. Slack's in-app feature request form is a good example: short fields, embedded in the product, accessible at any point in the user journey.

Zonka's in-product feedback and in-app feedback SDK cover this channel for both web and mobile.

Feedback Button

A feedback button is a persistent, clickable element on your product or website that stays accessible as users navigate. It doesn't interrupt. It waits. When a user has something to say, it's there.

Position it somewhere consistent: a corner of the screen, the bottom of a dashboard, the sidebar. The design should fit the product, not fight it. And make sure it doesn't disappear on mobile.

Email Surveys

Email works best when it's targeted, not broadcast. Send to your most active users, or to users who've recently interacted with a specific feature. A blanket "tell us what you want" email produces volume but not signal.

Format matters too: a question embedded directly in the email body gets meaningfully more responses than a "click here to take our survey" link. Keep it to one question in the email, with an optional follow-up link for more detail.

Send a confirmation when someone submits. Not a generic auto-reply. An acknowledgment. More on that below.

For a comparison of in-app vs email collection, see the in-app surveys guide.

Feedback from Sales and Customer Service Teams

Sales and CS teams are closest to customers. They hear things that would never make it into a form: frustrations that come out sideways, feature comparisons with competitors, use cases the user hasn't even articulated as a request yet.

But "gather feedback and send it back to the product team" isn't a system. It's a hope.

A system looks like this:

- A shared Jira label or Slack channel where CS and sales tag conversations as feature requests, with the customer's name, company, and one sentence on the use case

- A weekly 15-minute product-CS sync where tagged requests are reviewed

- Notes made at the moment of the conversation, not reconstructed from memory

Capture who asked and why at the point of interaction. That's the data that makes every downstream conversation faster.
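As a rough illustration, the capture could be a small record with a check that the essentials were filled in before it goes to the weekly sync. This is a sketch under assumptions: the `CapturedRequest` fields and the `missing_fields` helper are hypothetical, not a Jira or Slack schema.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class CapturedRequest:
    """A note a CS or sales rep logs during a conversation (illustrative fields)."""
    customer_name: str
    company: str
    use_case: str       # one sentence on why they asked
    captured_by: str    # the rep who heard it
    captured_on: date   # logged at the time, not reconstructed later


def missing_fields(note):
    """Return the names of required text fields that were left blank."""
    required = ("customer_name", "company", "use_case")
    return [name for name in required if not getattr(note, name).strip()]


# Made-up example: the rep forgot the use case.
note = CapturedRequest("Dana Lee", "Acme", "", "sam", date(2024, 5, 2))
print(missing_fields(note))
```

A note that comes back with an empty list is ready for review. Anything else goes back to the rep while the conversation is still fresh.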

Customer Advisory Boards

Customer advisory boards are the highest-signal source most teams treat as the lowest-effort one. Three sentences on a webpage, one meeting a year, and a vague agenda. That's not a CAB. That's a customer dinner with a whiteboard.

A CAB that actually produces useful feature signal looks different.

Who belongs: 6–12 customers. Mix power users (who know the product's edges), high-value accounts (whose requests carry business weight), and recently churned customers. That last group is underrated. The churned perspective tells you what the product couldn't do that mattered enough to walk away over.

Cadence: Quarterly, 60–90 minutes.

What to bring: Don't open with "what do you want?" That produces noise. Bring 3–4 specific ideas or problems and ask the group to validate or challenge them. Structured conversation surfaces more useful signal than open brainstorming.

What to do with the output: Everything surfaced in a CAB session gets logged as a formal request in your intake system, same process as an in-app submission. If it doesn't make it into your system, it doesn't exist.

One honest caveat: CABs represent your most engaged customers. Weight their input accordingly, especially for features that would serve the whole base, not just your power segment.

Community Forums and Public Roadmap Boards

Public roadmap tools like Canny, Frill, and Productboard's portal add a dimension none of the other channels give you: ranked signal at scale. Users submit ideas, upvote each other's requests, and comment on what they want changed. You see not just volume but which requests resonate beyond the person who wrote them.

Community forums (Slack communities, Discord, in-product communities) surface organic requests you'd never see via a form. Users describing problems to each other, workarounds they've built, limitations they've found. Unstructured, but the patterns are real.

Polls work best when you're validating, not discovering. If you already have three candidate features and want to know which one matters most to your users, a quick poll gives you a ranked answer. It's not a discovery tool. It's a tiebreaker.

All of it should feed into the same place. For how these channels connect to the rest of the cycle, see the product feedback loop guide.

How Do You Set Up a Feature Request Collection System?

Slack, Zendesk, HubSpot. The product-led growth companies people cite as benchmarks. They don't just collect feature requests well. They've built systems where every customer-facing team feeds into the same place, every request gets a response, and product decisions trace back to real customer input. That's the bar. Not a form on a page. A system.

Here's what it takes to build one.

Step 1: Target Who You Ask, Not Just How

Not every user should see your feature request form. Free trial users often request basics that already exist. Enterprise admins want integrations. Power users want the edge cases. Showing the same form to all of them dilutes the signal from each.

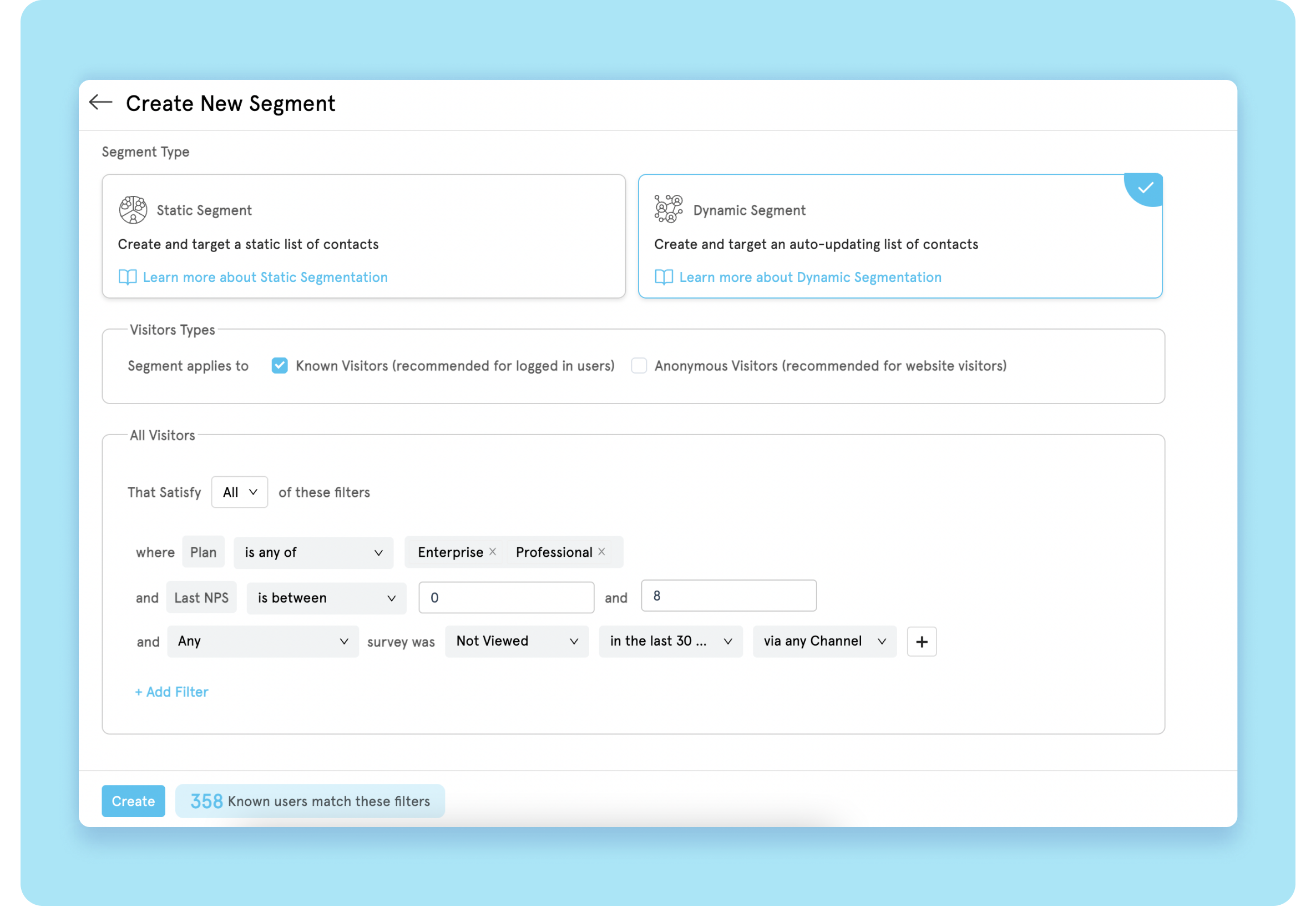

Zonka Feedback's dynamic user segments let you decide exactly who sees a feature request form and when. User segmentation can be built around:

Contact Attributes: Language, country, plan type, onboarding date, subscription tier, and more. If you want feature requests only from paid users, this is where you set that boundary.

Visit Analytics: Pages visited, number of sessions, traffic source. A user who's visited your integrations page three times this week might have something to say about your integration options.

Survey Score: Last or average NPS, CSAT, CES. A promoter who regularly scores you 9 or 10 will give you aspirational feature ideas. A passive who scores 7 might tell you what's missing.

Survey Interactions: Whether specific surveys were viewed, answered, or skipped. You can avoid re-prompting users who've already responded.

With segmentation, the form reaches the right users at the right moment. Without it, you're asking everyone and hearing noise.
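The targeting logic can be sketched as a simple rule that combines the four attribute groups above. This is an illustration only, not Zonka Feedback's API: the `UserProfile` fields, the thresholds, and the `should_show_form` function are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class UserProfile:
    plan: str                      # contact attribute, e.g. "free_trial" or "paid"
    integrations_page_visits: int  # visit analytics, e.g. visits this week
    last_nps: Optional[int]        # survey score; None if never surveyed
    answered_feature_form: bool    # survey interaction


def should_show_form(user):
    """Decide whether this user sees the feature request form."""
    if user.answered_feature_form:
        return False  # avoid re-prompting someone who already responded
    if user.plan != "paid":
        return False  # assumed boundary: paid users only
    visited_often = user.integrations_page_visits >= 3
    passive_or_promoter = user.last_nps is not None and user.last_nps >= 7
    return visited_often or passive_or_promoter


print(should_show_form(UserProfile("paid", 3, None, False)))
print(should_show_form(UserProfile("free_trial", 5, 9, False)))
```

The exact thresholds matter less than having the rule written down somewhere the whole team can see and adjust.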

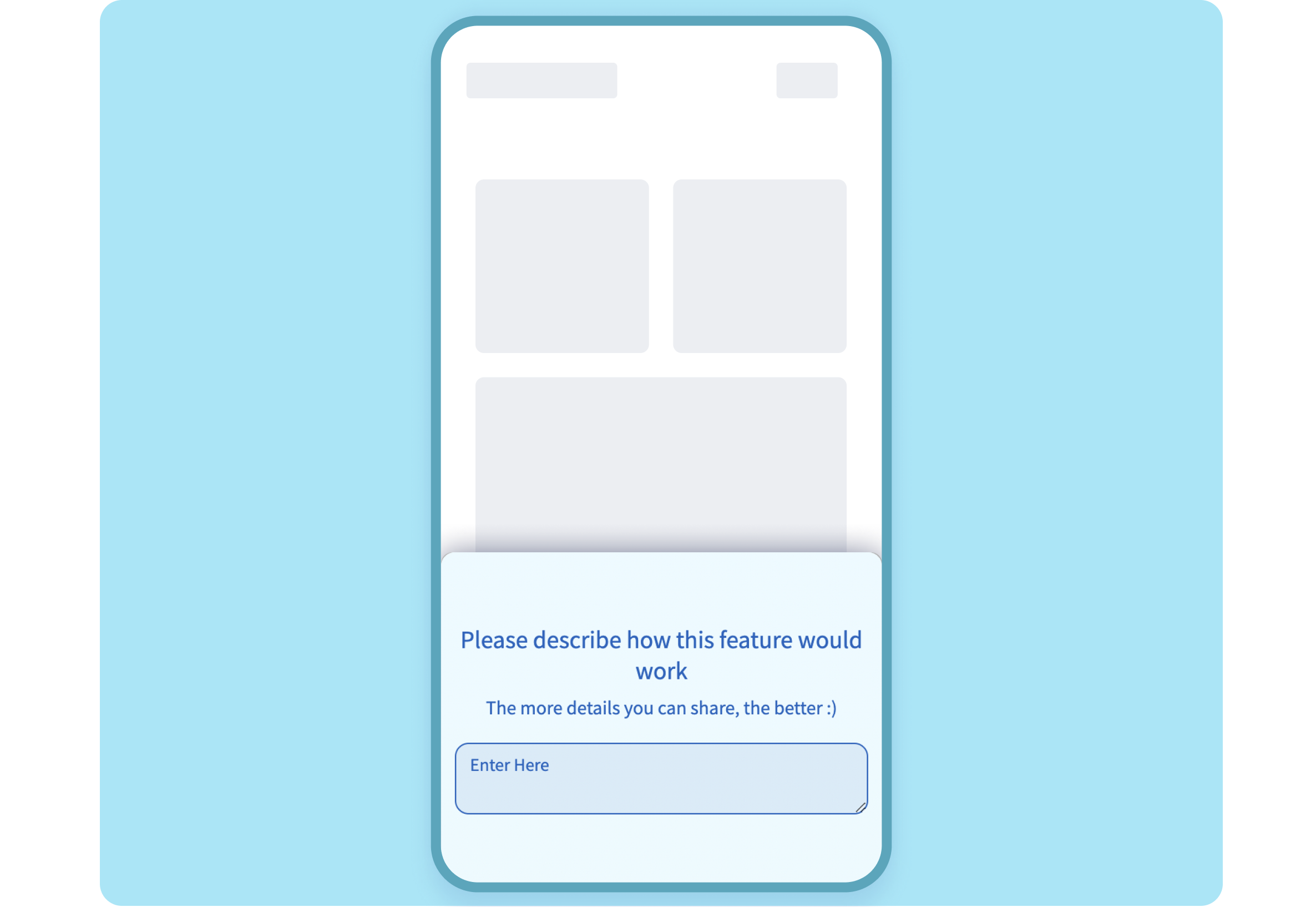

Step 2: Embed the Form Where Users Already Are

Once the form is built, add it directly to your product using a code snippet or Mobile SDK. Not a link to an external survey. A form that lives inside the product itself, accessible without leaving the workflow.

A few placement considerations:

- Fixed position so it stays visible as users navigate

- Design consistent with the product. Anything that clashes gets ignored.

- Notifications routed to the right person immediately, not in a weekly digest

The SDK handles iOS, Android, React Native, and Flutter, so the experience is native, not a web overlay.

Step 3: Route Everything to One Place

Feature requests that arrive through six channels but live in six places aren't collected. They're stored. The moment you need to find patterns, you're doing manual archaeology across Slack, email, support tickets, and a spreadsheet someone set up last year.

The real problem isn't storage. It's that the same request from five customers through five channels looks like five different problems. Centralization reveals it's one. That's what turns noise into signal.
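Here is a deliberately naive sketch of that consolidation, assuming each raw request arrives as a (customer, channel, title) tuple. The `normalize` and `consolidate` helpers are hypothetical. Matching on cleaned-up titles only catches near-identical wording, so real requests phrased differently would still need a human or a smarter matcher.

```python
import re
from collections import defaultdict


def normalize(title):
    """Lowercase, replace punctuation with spaces, and collapse whitespace."""
    cleaned = re.sub(r"[^a-z0-9 ]+", " ", title.lower())
    return " ".join(cleaned.split())


def consolidate(raw_requests):
    """Group requests by normalized title, tracking who asked and where."""
    merged = defaultdict(lambda: {"customers": set(), "channels": set()})
    for customer, channel, title in raw_requests:
        entry = merged[normalize(title)]
        entry["customers"].add(customer)
        entry["channels"].add(channel)
    return dict(merged)


# Made-up sample data: the same request worded slightly differently.
raw = [
    ("Acme", "support ticket", "CSV export"),
    ("Globex", "sales call", "csv export!"),
    ("Initech", "in-app", "Csv  Export"),
    ("Umbrella", "email", "Dark mode"),
]
for feature, info in consolidate(raw).items():
    print(feature, len(info["customers"]), sorted(info["channels"]))
```

Three entries from three channels collapse into one request with three customers behind it, which is the view a product team actually needs.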

Zonka Feedback's Response Inbox pulls all incoming feedback into one view, filterable by contact attributes, request type, date, and survey score. Integrations with tools like Jira and Google Sheets mean nothing has to be manually copied over. Requests route directly into the tools your product team already uses.

Once requests are centralized, the next phase begins. For prioritization frameworks, how to evaluate requests with your stakeholders, and how to decide what to build, see the handling feature requests guide.

Step 4: Acknowledge Every Request Before Anything Else

Here's the fastest way to get more feature requests from your users: respond to the ones you already have.

Users stop submitting when they feel like they're talking to a void. An in-app form, a feedback button, a polished intake process: none of it matters if the person who submitted walks away wondering whether anyone read it.

Minimum viable acknowledgment: a message that confirms the request was received and is being reviewed. Automated is fine. Generic is not. "Thanks for your feedback!" is worse than nothing. It signals that no one actually read what was submitted.

Good acknowledgment sounds like: "We received your request for [feature]. Our product team reviews all submissions and considers them in roadmap planning. We'll update you if this moves forward." Specific. Honest about the process. Not a promise that it'll be built.
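An automated version of that message can still be specific. The sketch below fills in the feature name and refuses to send if there isn't one. The `acknowledgment` function is a hypothetical helper, not part of any survey tool.

```python
def acknowledgment(feature):
    """Build a specific acknowledgment message for a submitted request."""
    feature = feature.strip()
    if not feature:
        # Without a feature name this would become the generic thank-you
        # the message is meant to replace, so fail loudly instead.
        raise ValueError("feature name is required")
    return (
        f"We received your request for {feature}. "
        "Our product team reviews all submissions and considers them in "
        "roadmap planning. We'll update you if this moves forward."
    )


print(acknowledgment("CSV export"))
```

Raising an error on a blank feature name is a design choice: it's better for the automation to fail visibly than to quietly fall back to "Thanks for your feedback!"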

There's a difference between acknowledging a request and closing the loop on it. Acknowledgment is what you do at collection. Loop closure (status updates, shipping notifications, the "you asked, we built it" moment) comes later. That process is covered in closing the product feedback loop and in the handling feature requests guide.

But acknowledgment? That's your job at intake. Don't skip it.

What Comes Next

You now have the intake side covered: the right questions, the right channels, the targeting logic, and a system that doesn't fall apart at volume.

The harder part is what happens after.

Product teams that collect well but handle poorly still lose customers. A request that gets submitted, acknowledged, and then silently dropped is worse than no feedback channel at all. The acknowledgment made an implicit promise that someone was listening. Dropping the request breaks that promise and damages the relationship.

For how to evaluate requests, prioritize them against each other, say no gracefully, and close the loop when something ships, see the full guide to handling customer feature requests.