TL;DR

- A beta testing survey collects structured feedback from real users during a pre-launch trial phase, covering usability, performance, bugs, and feature fit.

- There are five distinct survey types for beta programs: weekly pulse surveys, feature-specific surveys, bug triage surveys, final assessment surveys, and screening surveys. Each serves a different purpose and belongs at a different stage.

- Effective beta surveys are timed to specific moments in the test, not sent as a single end-of-program dump. Memory of specific friction fades in 24–48 hours.

- The 10 question categories in this guide cover every major testing dimension, with guidance on which stage each category belongs to.

- The best beta programs close the loop: testers see their feedback reflected in the product before launch.

Most product teams trust their internal QA. They run tests, log bugs, fix them — and call it done. What they miss is the gap between how engineers use a product and how real users do. That gap is exactly what beta testing surveys are built to close.

A beta survey only works if you ask the right questions at the right moment, route responses to the people who can act on them, and close the loop before launch. Most programs get one of those three right. This guide covers all of them.

What Is a Beta Testing Survey?

A beta testing survey is a structured feedback form sent to a select group of users during the trial phase of a product, feature, or major update, before the public launch. The goal is to surface usability issues, catch bugs that internal testing missed, and validate feature decisions and product ideas before they reach everyone.

Beta surveys differ from general product feedback collection in scope and timing. They target a controlled group during a defined window, and the questions are tied to specific product dimensions rather than overall satisfaction. A CSAT survey asks "how satisfied are you?" A beta survey asks "did the export function time out on large datasets?" The distinction matters because it changes what you do with the data. General feedback informs your roadmap over months. Beta feedback informs your fix list over days.

Most teams run beta surveys alongside other channels: bug report forms, in-app feedback widgets, tester forums. Figma took this approach with their FigJam beta, running a dedicated community forum alongside structured surveys so testers could discuss issues openly while the survey captured comparable, structured data across the full cohort. Bug reports give you individual incidents. Forums give you discussion. Surveys give you patterns across your entire tester group, and patterns are what help product managers prioritize what to fix before launch.

Beta Feedback Survey Template

Running a beta program right now? Use the beta testing survey template. It covers usability, performance, and feature feedback out of the box. For a broader product feedback version, try the beta product feedback survey template. Deploy either in-app, on your website, or via email.

Types of Beta Testing Surveys

Not all beta surveys serve the same purpose. Treating them as one category leads to bloated questionnaires that try to do everything and end up doing nothing well.

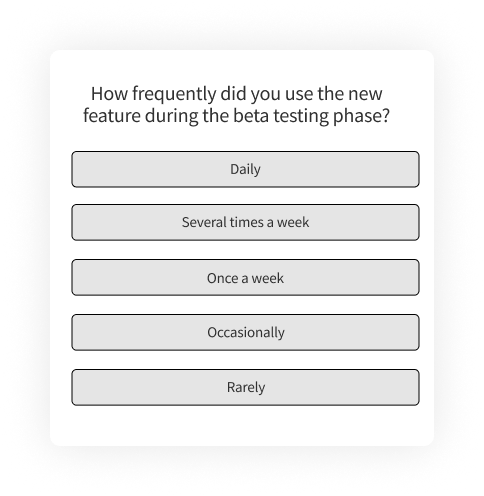

Weekly pulse surveys are short (3–5 questions), recurring check-ins that track sentiment and surface blockers over time. A single question like "What's one thing that frustrated you this week?" plus a 1–5 satisfaction rating gives you a trendline you can compare across weeks. If satisfaction dips in week three, you know something changed. These work best for betas running three or more weeks where you want to catch regression issues or measure the impact of mid-beta updates.

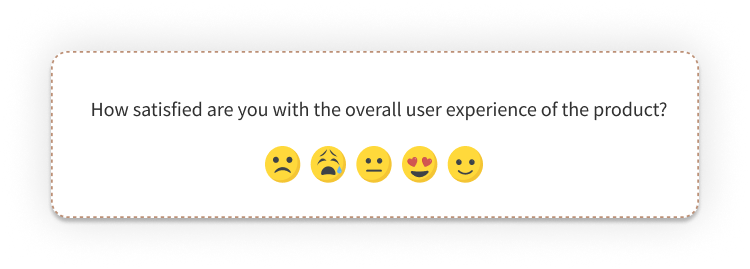

Feature-specific surveys zoom in on a single feature or workflow, sent right after testers interact with a specific capability for the first time. Atlassian does this well: during beta phases, they trigger an emoji-based NPS survey immediately after users engage with a new feature, then follow with an optional open-text prompt for detail. The targeting is what makes it useful. You're asking about a specific experience while it's still fresh, not asking testers to reconstruct it two weeks later.

Bug triage surveys sit between a traditional bug report form and a satisfaction survey. They include structured fields (what happened, steps to reproduce, device and OS, severity rating) paired with experience context (how much did this disrupt your workflow? would this prevent you from using the product?). These help engineering teams prioritize by business impact, not just technical severity. A bug that crashes the app on an edge case matters less than one that blocks the primary workflow for 60% of testers.

Final assessment surveys go out in the last week of the beta. They capture the big-picture view: overall satisfaction, likelihood to recommend, competitive comparison, and the single most important improvement. This is where NPS belongs in a beta context. NPS measures relationship loyalty, and by the end of a beta, testers have had enough exposure to form a genuine opinion. Asking NPS after day two of a beta gives you noise. Asking it after three weeks of real use gives you signal.
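
For reference, NPS is conventionally calculated from 0–10 "likelihood to recommend" ratings as the percentage of promoters (9–10) minus the percentage of detractors (0–6). A minimal Python sketch of that arithmetic, using made-up ratings for illustration:

```python
def net_promoter_score(ratings):
    """Return NPS (-100 to 100) from 0-10 likelihood-to-recommend ratings."""
    if not ratings:
        raise ValueError("need at least one rating")
    promoters = sum(1 for r in ratings if r >= 9)    # ratings of 9 or 10
    detractors = sum(1 for r in ratings if r <= 6)   # ratings of 0 through 6
    return 100 * (promoters - detractors) / len(ratings)


# Hypothetical final-assessment ratings from ten testers.
final_week = [10, 9, 9, 8, 7, 7, 6, 5, 9, 10]
print(net_promoter_score(final_week))  # 5 promoters, 2 detractors -> 30.0
```

With only a handful of testers, a single response can swing the score by many points, which is one more reason to treat early NPS readings with caution.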

Screening surveys happen before the beta starts. They qualify testers by demographics, technical setup, use-case fit, and experience level. A well-screened beta cohort produces better feedback than a large but random one because the testers match your actual target market. If 30% of your testers don't match your target user profile, your aggregated survey scores reflect a market you're not selling to. A screening survey that filters for role, tech stack, and use-case alignment prevents that distortion from contaminating every survey that follows.
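
A screening filter along these lines can be sketched in a few lines of Python. The field names (`role`, `stack`, `use_case`) and the target profile below are hypothetical; substitute whatever your own screening survey collects.

```python
from dataclasses import dataclass


@dataclass
class Applicant:
    name: str
    role: str
    stack: set       # tools the applicant already uses
    use_case: str


# Hypothetical target profile; replace with your own criteria.
TARGET_ROLES = {"product manager", "designer"}
REQUIRED_STACK = {"jira"}
TARGET_USE_CASES = {"roadmap planning", "user research"}


def matches_target(a):
    """True when role, tech stack, and use case all fit the target profile."""
    return (
        a.role in TARGET_ROLES
        and REQUIRED_STACK <= a.stack   # every required tool is present
        and a.use_case in TARGET_USE_CASES
    )


def screen(applicants):
    """Return (accepted applicants, share of applicants screened out)."""
    accepted = [a for a in applicants if matches_target(a)]
    rejected_share = 1 - len(accepted) / len(applicants) if applicants else 0.0
    return accepted, rejected_share


applicants = [
    Applicant("Ana", "product manager", {"jira", "slack"}, "roadmap planning"),
    Applicant("Ben", "student", {"notion"}, "coursework"),
    Applicant("Chi", "designer", {"jira", "figma"}, "user research"),
]
accepted, rejected_share = screen(applicants)
print([a.name for a in accepted], round(rejected_share, 2))  # ['Ana', 'Chi'] 0.33
```

The screened-out share is worth tracking on its own: if it is high, your recruitment channel may be reaching the wrong audience.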

The best beta programs combine several of these types across the testing window, each feeding into your broader product feedback process. A typical structure: screening survey at recruitment, weekly pulse during the beta, one or two feature-specific surveys triggered by releases, and a final assessment at close.

When to Run a Beta Survey (And When Not To)

Timing is the single biggest factor in beta survey quality, and the one most teams get wrong.

The default approach is to send one big survey at the end of the beta. By day 21, testers have forgotten the friction they hit on day three. Research on episodic memory shows that specific event recall degrades sharply after 24–48 hours and shifts to generalized narrative after five to seven days. A tester who hit a confusing modal on Tuesday can describe it precisely on Tuesday evening. By Friday, they'll say "the UX was a bit confusing" — which tells your design team nothing actionable.

Here's a framework that works better, organized by beta phase.

Post-onboarding (Days 1–3). Send a short survey (4–6 questions) focused entirely on setup, first impressions, and initial navigation. This is when friction is fresh. If your installation process is confusing or your UI doesn't make sense on first contact, this is the only window where testers will tell you with specificity. Wait a week and they'll either have figured it out (and forgotten the pain) or quietly stopped using the product. Slack's beta phase is a useful case study here. The team used early tester feedback on onboarding to refine how new users were introduced to channels and threading, which directly shaped the product's first-run experience.

Mid-beta (Weeks 2–3). The meatiest survey window. Testers have used the product enough to have real opinions about features, performance, and workflow fit. Ask about feature functionality, stability, and integration with their existing tools. Keep it to 8–12 questions. When we helped LivingPackets, the smart packaging company, roll out their product beyond internal testing, their CX Manager Amal Hamid described the approach: "We started sending surveys to users when we noticed they were struggling with the product. Zonka Feedback helped us quickly identify where the process was blocked and improve the user experience." The key detail: surveys were triggered by observed user behavior, not a calendar date. That's the difference between feedback that pinpoints a problem and feedback that vaguely gestures at one.
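
To make "triggered by observed user behavior" concrete, here is one possible rule, sketched in Python. It is an illustration only, not a description of how LivingPackets or any particular tool detects struggling users: the event format and the three-failure threshold are both assumptions.

```python
from collections import Counter

STRUGGLE_THRESHOLD = 3  # assumption: three failed attempts at one step


def steps_to_survey(events, threshold=STRUGGLE_THRESHOLD):
    """events: (step_name, succeeded) pairs for one tester, in any order.

    Returns the steps where the tester failed at least `threshold` times.
    """
    failures = Counter(step for step, succeeded in events if not succeeded)
    return sorted(step for step, count in failures.items() if count >= threshold)


# Hypothetical event log for a single tester.
events = [
    ("export", False), ("export", False), ("login", True),
    ("export", False), ("import", False),
]
print(steps_to_survey(events))  # ['export']
```

A rule like this lets the survey ask about the export step specifically, while the tester still remembers what went wrong.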

Centercode's research on beta participation found that average active participation rates sit around 30% for unmanaged programs. Longer surveys at this stage push that number lower.

Pre-launch (Final week). A focused wrap-up: overall experience, likelihood to recommend, and the single most important improvement. Keep it short (5–7 questions) and include an open-text field for anything testers haven't had a chance to say. This is also the right moment for competitive comparison questions because testers have now used your product enough to benchmark it against alternatives.

When NOT to survey. Don't survey after a major bug that blocked testers from using the product. Fix the bug first, let testers re-engage, then survey. Sending a "how was your experience?" survey when the experience was "it crashed and I couldn't log back in" gets you data that reflects the bug, not the product. You already know about the bug.

And don't survey testers who haven't actually used the product. If your analytics show a tester logged in once and never returned, a survey won't tell you why. A direct message will. Google Glass's beta program is a cautionary tale here. The limited scope of user feedback during testing meant broader usability and social acceptance concerns went unaddressed, contributing to a product that struggled to find market fit after launch.
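
The two exclusion rules above (an unresolved blocking bug, and testers who have barely used the product) can be expressed as a simple pre-send check. This is a sketch under assumptions: the `TesterStatus` fields are hypothetical, and the minimum of two sessions is one reading of "logged in once and never returned."

```python
from dataclasses import dataclass


@dataclass
class TesterStatus:
    tester_id: str
    sessions: int            # product sessions recorded so far
    has_open_blocker: bool   # a blocking bug they hit is still unfixed


MIN_SESSIONS = 2  # assumption: a single login does not count as real use


def survey_action(t):
    """Decide what to do with a tester before a survey goes out."""
    if t.has_open_blocker:
        return "wait"            # fix the bug and let them re-engage first
    if t.sessions < MIN_SESSIONS:
        return "direct_message"  # a personal note will tell you more than a survey
    return "send_survey"


print(survey_action(TesterStatus("t-01", sessions=6, has_open_blocker=False)))  # send_survey
print(survey_action(TesterStatus("t-02", sessions=1, has_open_blocker=False)))  # direct_message
print(survey_action(TesterStatus("t-03", sessions=4, has_open_blocker=True)))   # wait
```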

Which Tools Are Best for Running Beta Testing Surveys?

Not every survey tool is built for beta testing. You need something that can collect feedback in context: inside the product, in real time, from users who are actually putting it through its paces.

How we evaluated these tools:

We reviewed these tools based on hands-on research, official documentation, G2 reviews, and real feature behavior during beta testing workflows. We're the team behind Zonka Feedback, so we're upfront about that. Tools aren't ranked. We aimed for a research-driven evaluation that helps you pick the right fit for your beta program.

The criteria that matter most for beta survey tools: survey customization (branching, logic, question types), distribution channels (in-app, email, web), integration with bug tracking and project management tools, real-time alerting for critical feedback, and analytics depth.

1. Zonka Feedback: Best for In-App Beta Surveys with AI-Powered Analysis

Zonka Feedback captures beta feedback in the product — triggered at onboarding, after feature interactions, or at known friction points, not through a survey sent days later. The AI layer groups open-text responses by theme, so a program with 300 tester comments doesn't require 300 reads. We helped LivingPackets set this up when they rolled out their smart packaging product beyond internal testing, triggering surveys based on user interactions and routing responses into their task management tools (CRISP for chat, ClickUp for issue tracking) so feedback reached the right team without manual triage.

Key Features

- Multi-channel collection across in-app, email surveys, website surveys, mobile, and offline channels from one platform

- Ready-to-use survey templates for beta feature feedback, usability testing, and performance checks

- Real-time alerts when specific thresholds are hit (low satisfaction score, negative keyword flagged)

- AI-powered thematic analysis that groups open-text responses by topic (no manual tagging)

- Integrations with Jira, Slack, HubSpot, and Salesforce so bugs go directly into your workflow

Zonka Feedback Pros

- In-app survey triggers mean you catch feedback at the moment of friction, not 24 hours later

- AI analysis handles large response volumes without manual work

- Multi-channel collection from one platform: web, in-app, email, and SMS

Zonka Feedback Cons

- Advanced AI features are on higher-tier plans, not the entry level

- No native video feedback capture — pair with a screen recording tool if qualitative session data is needed

Zonka Feedback Pricing

- Custom pricing available based on business requirements

- 14-day free trial of paid features

2. UserTesting: Best for Video-Based Beta Tester Sessions

UserTesting is an enterprise-level platform built for experience research through recorded sessions: video, audio, and written feedback from actual users completing tasks. For beta testing, it's useful when you need to watch how someone navigates a new feature, not just read what they thought of it afterward. Survey data tells you what testers think; session recordings show what they actually do, which matters most when testing complex workflows or first-time product interactions.

Key Features

- Targeted tester recruitment filtered by demographics, job role, industry, or technical experience

- Recorded sessions capturing screen activity, facial reactions, and verbal narration simultaneously

- Custom task-based test scenarios you can configure to match specific beta workflows

- Highlight reel clips that let you pull key moments from sessions and share with your team

- Integrations with Jira, Slack, and other project management tools for finding and logging issues

UserTesting Pros

- Seeing a user's actual behavior closes the gap between what people say and what they do

- Strong recruitment capabilities: useful for teams that don't already have a beta user pool

UserTesting Cons

- High cost: enterprise pricing puts it out of reach for smaller teams

- Video-based sessions take longer to analyze than structured survey responses; no automated theme detection

- Better as a supplement to a survey tool than a replacement for one

UserTesting Pricing

- Custom. Contact their team for quotes.

3. Centercode: Best for Structured Beta Program Management

Centercode handles the full beta lifecycle: recruitment, participant management, feedback collection, bug tracking, and reporting, all from one platform. It's more program management tool than survey tool, but the feedback collection layer is built in. Their managed programs report participation rates above 90%, compared to the 30% industry average for unmanaged betas. That gap makes a strong case for purpose-built infrastructure when your program runs 50+ testers across multiple release waves.

Key Features

- End-to-end beta program management: recruitment, onboarding, engagement scoring, and reporting in one platform

- Built-in survey templates for weekly pulse check-ins and final assessment at program close

- Automated tester engagement scoring that flags at-risk participants before they go silent

- Native Jira sync routes bug reports and feature requests directly into your engineering backlog

- Participant portal gives testers a single place to submit feedback, log bugs, and track progress

Centercode Pros

- Strong structure for teams running beta programs with dozens or hundreds of testers

- Bug tracking and feedback live in one system: no copy-paste between tools

Centercode Cons

- Steeper learning curve than standalone survey tools; overkill if you just need a survey

- G2 reviewers note the platform takes time to configure properly

Centercode Pricing

- Beta version: free for up to 50 testers and 1 project

- Delta subscription: starts at $39/user/month

- Company-wide plans: pricing on request

More Tools Worth Knowing

- Luciq (formerly Instabug): Captures user opinions and feature requests via in-app surveys, with real-time alerts for critical performance issues during beta phases. Strong on mobile app testing specifically.

- Marker.io: Built for bug reporting. Testers annotate screenshots directly, and the reports sync with project management tools like Jira or Linear. Good complement to a survey tool when you need both feedback and reproducible bug documentation.

- UXCam: Offers session replays, heatmaps, and user journey analytics, useful for understanding what testers do when they're not filling out a survey. No native survey builder. Pairs well with structured survey data.

- SurveySparrow: Collects contextual feedback via templates with a conversational survey format. Integrates with HubSpot and other tools, and supports comparative analysis across testing rounds.

What Should Your Beta Testing Survey Actually Ask?

This is where most beta surveys go wrong. Teams build one long survey, send it at the end of the testing period, and get responses that are either too vague ("it was fine") or too late to act on.

The 10 categories below cover every meaningful dimension of a beta test. For general product feedback collection beyond beta, see our product survey questions guide. Each category includes guidance on which testing stage it belongs to, so you're asking the right questions at the right moment, not bundling everything into a single form nobody finishes.

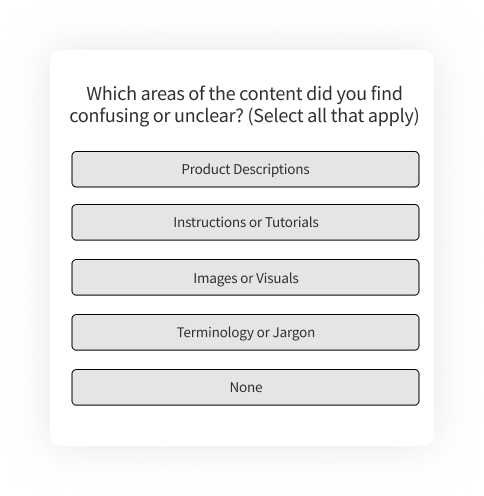

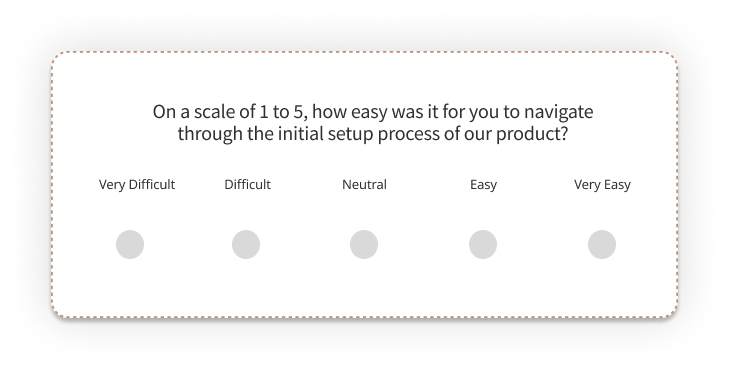

1. Usability and User Experience

When to use: Post-onboarding survey and mid-beta survey. Usability issues surface early and should be caught before testers develop workarounds that mask the problem. These questions tie directly into product-led growth: the easier your product is to use during beta, the stronger your adoption signal at launch. For broader post-launch measurement, see our guide on user experience surveys.

- How easy was it to navigate the product?

- Did you encounter any difficulties using the product?

- Did you find the layout and design of the product visually appealing?

- How does the product compare to similar products in the market?

- Did you encounter any difficulties in finding the information or options you needed?

- Were there any specific features or functionalities that were challenging to use?

- What suggestions do you have for improving the product to provide a better overall experience?

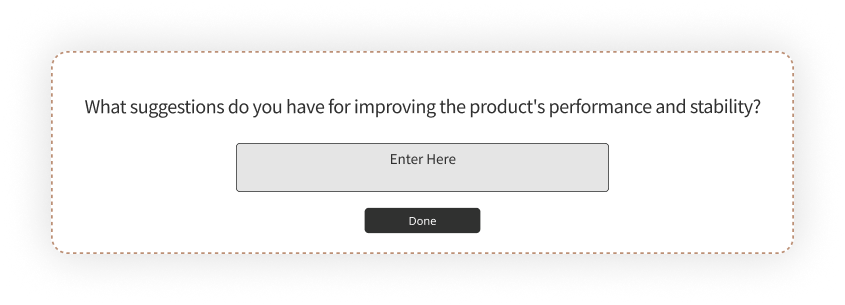

2. Performance and Stability

When to use: Mid-beta survey, after testers have had enough usage time to encounter performance issues under real conditions. Don't ask these on day one unless you're testing infrastructure specifically. Engineering teams should receive these responses directly through a Jira or Slack integration so they can triage without waiting for a summary report.

- Did you experience any errors or crashes while using the product?

- Were there any delays or lags in product response time?

- Did you encounter any issues with loading or refreshing pages?

- Were there any noticeable performance issues when executing specific tasks or functions?

- Did the product consume an excessive amount of system resources (e.g., CPU, memory)?

- Were there any compatibility issues with your operating system or other software?

To track individual bug reports alongside performance feedback, consider running a separate structured bug report form in parallel with this question category.

3. Feature Functionality and Effectiveness

When to use: Mid-beta survey. Testers need enough time to actually use features before you ask about them. Pair these questions with a process for collecting product feature requests so that survey data connects to specific product decisions. When testers identify a missing capability, that response should flow into your feature request handling process, not an unread spreadsheet.

- Did you find the product's features helpful and easy to use?

- How would you rate the performance and responsiveness of the feature during your testing?

- Did you encounter any errors, bugs, or unexpected behavior while using the feature?

- Were there any specific aspects or options within the feature that were confusing or unclear?

- How could the product's features be improved to better meet your needs?

4. New Feature Feedback

When to use: Immediately after a new feature is enabled during the beta. Don't wait for the end-of-beta survey. Testers have the sharpest opinions right after trying something new. This is also where product idea validation happens in practice: your beta testers are telling you whether the feature you built matches the need you identified.

- What specific tasks or actions did you use the new feature for?

- How would you rate the usability and intuitiveness of the new feature?

- Were there any aspects or options within the new feature that were confusing or difficult to understand?

- Did you encounter any errors, bugs, or unexpected behavior while using the new feature?

- Based on your experience with the new feature, what suggestions or improvements would you recommend?

5. Integration with Existing Systems

When to use: Mid-beta or later, once testers have attempted to connect the product with their existing tools. This is especially relevant for SaaS products that live within a larger tech stack.

Integration friction is one of the top reasons beta testers give up on a product without saying anything. It creates silent churn before launch. Most teams skip this category in beta surveys because they assume integration testing is a separate QA workstream. It is. But the survey captures something QA doesn't: whether the integration experience felt easy or frustrating from the user's perspective. That's a different question from whether it technically works.

- Did you encounter any issues while integrating the product with your existing system?

- How easy was the integration process?

- Did you require any additional support or resources to integrate the product?

- Did the integration of our product with your existing system or software impact the overall performance or stability of your system?

- Which integrations would you need before considering this product for production use?

6. Content Clarity and Effectiveness

When to use: Post-onboarding and mid-beta. Content issues (unclear instructions, confusing error messages, missing help documentation) are often invisible to internal teams because they already know the product. Beta testers see it fresh. This overlaps with content experience feedback, but here it is scoped specifically to the beta context.

- Was the in-product content (labels, instructions, tooltips) easy to understand?

- Did the content provide the information you needed to complete tasks independently?

- Did the content align with your expectations for the product?

- What suggestions do you have for improving the clarity and effectiveness of the product's content?

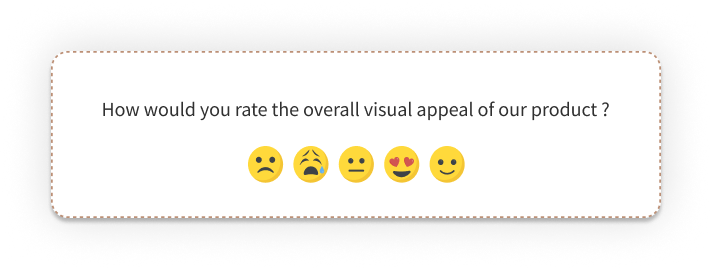

7. Visual Design and Branding

When to use: Post-onboarding survey, when first impressions are strongest. Also useful mid-beta if you've pushed UI changes during the testing period.

Visual feedback at the beta stage is worth collecting. Not to let testers redesign your product, but to flag jarring inconsistencies, brand misalignments, or elements that confuse instead of guide.

- How visually appealing do you find the overall design of our product?

- Did the product's visual design and branding align with your expectations?

- Did the color scheme and typography used in the product enhance or detract from the user experience?

- Did the logo effectively convey the product's brand identity?

- Were there any specific elements of the visual design that stood out to you positively or negatively?

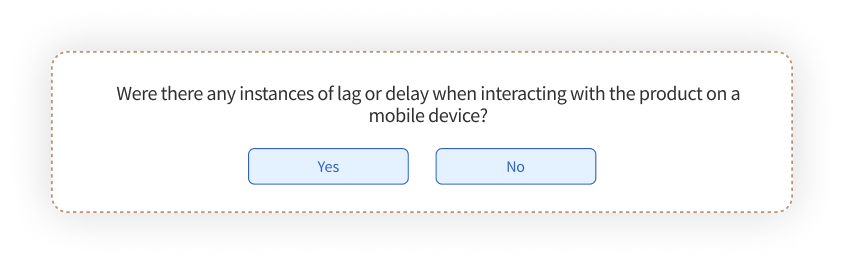

8. Mobile Responsiveness

When to use: Any survey where a meaningful portion of your testers access the product on mobile devices. Mobile responsiveness issues are often device-specific, so include a "which device?" qualifier.

Apps built and tested on desktop often break the moment a user switches devices. And for products where mobile is the primary use case, a broken mobile experience doesn't get discovered in QA. It gets discovered in reviews. For teams building native mobile apps, in-app beta surveys deployed through a mobile SDK capture feedback without pulling testers out of the testing flow.

- How responsive was the product on your mobile device?

- Did you encounter any issues while using the product on your mobile device?

- Did the product's layout and design adapt well to different screen sizes?

- How would you rate the overall mobile experience of the product compared to the desktop version?

- Based on your experience, what suggestions or improvements would you recommend to enhance the product's mobile responsiveness?

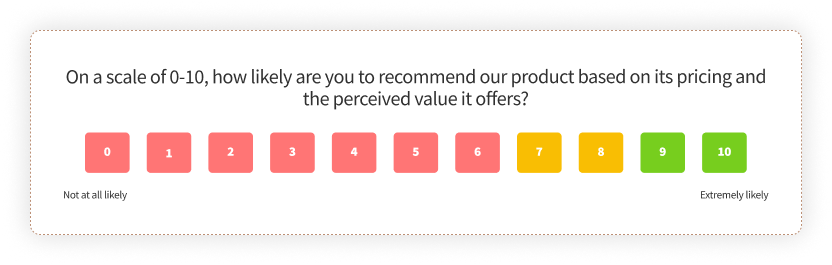

9. Pricing and Value Perception

When to use: Final survey only. Testers need full exposure to the product before they can assess value. Asking about pricing too early produces answers based on expectations, not experience.

This is the question category most beta surveys skip. It's also the one that tells you whether the product will sell. Testers who've actually used it have the clearest read on whether the value matches the price. These questions also support a product-market fit assessment: if 40%+ of your testers say they'd be "very disappointed" without the product, you're in a strong position regardless of where pricing lands.

- Do you feel that the product is priced appropriately for its features and functionality?

- How does the price of our product compare to similar solutions in the market?

- Do you feel that the product provides good value for its price?

- Are there any features or functionality that you feel are missing or should be included at the current price point?

- Did the pricing structure or model of the product provide flexibility and options that meet your needs?

- How likely are you to purchase the product at its current price point?
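
The 40% "very disappointed" benchmark mentioned in this category is simple to check once responses are in. The sketch below assumes you added a question along the lines of "How would you feel if you could no longer use this product?" with "Very disappointed" as one answer option; that question and the sample answers are illustrative, not part of the list above.

```python
PMF_THRESHOLD = 0.40  # the 40% benchmark cited in this section


def very_disappointed_share(answers):
    """Share of testers (0.0-1.0) who answered 'very disappointed'."""
    if not answers:
        raise ValueError("no answers to evaluate")
    hits = sum(1 for a in answers if a.strip().lower() == "very disappointed")
    return hits / len(answers)


# Hypothetical answers from five testers.
answers = [
    "Very disappointed", "Somewhat disappointed", "Very disappointed",
    "Not disappointed", "Very disappointed",
]
share = very_disappointed_share(answers)
print(f"{share:.0%}", share >= PMF_THRESHOLD)  # 60% True
```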

10. Product Onboarding

When to use: Post-onboarding survey, within 24–48 hours of the tester's first session. This is the most time-sensitive dimension. Onboarding questions are the most skipped and the most important. If testers struggle to get started, they'll never reach the features you actually want feedback on. Memory of onboarding friction fades fast. This is the only window where you'll get specific, actionable answers.

- How would you rate the ease of getting started with the product?

- Did you find the onboarding instructions clear and easy to follow?

- Were there any steps or aspects of the onboarding process that were confusing or unclear?

- Did the product provide sufficient guidance and support during the onboarding process?

- Did the product effectively communicate its key features and benefits during the onboarding process?

- Based on your experience with the onboarding process, what suggestions or improvements would you recommend to enhance the overall onboarding experience?

Which Beta Testing Questions Should You Use When?

You don't send all 10 categories at once. A 50-question survey at the end of a beta period gets skimmed, skipped, or abandoned. Here's the quick-reference map.

Early access (Days 1–3): Onboarding (#10) and usability (#1). You want to know if testers can get started and navigate without friction, before they've adapted to workarounds.

Active testing (Weeks 2–3): Performance (#2), feature functionality (#3), new feature feedback (#4), and integration (#5). These require enough product experience to answer meaningfully.

Pre-launch (Final week): Content clarity (#6), visual design (#7), mobile responsiveness (#8), and pricing perception (#9). These are the questions where tester responses most directly mirror what new customers will experience at launch.

Running in-app surveys lets you trigger each category at exactly the right moment rather than bundling everything into a single end-of-program survey.

How to Analyze Beta Survey Results

Collecting responses is the easy part. Turning them into a prioritized fix list before launch is where most beta programs stall.

Group quantitative data by dimension, not by question. Instead of looking at Q1, Q2, Q3 scores in isolation, aggregate all usability questions into a usability score, all performance questions into a performance score, and so on. Individual question scores are noisy: they're affected by question wording, placement in the survey, and tester fatigue (questions late in the form get lower engagement regardless of content). Aggregating across a dimension averages out that noise. A product that scores 4.5/5 on features but 2.1/5 on stability has a clear priority: fix the crashes before you polish the features.
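
As a rough illustration of aggregating by dimension, the Python sketch below averages every rating that belongs to the same dimension. The question IDs, the question-to-dimension mapping, and the ratings are all hypothetical.

```python
from collections import defaultdict
from statistics import fmean


def dimension_scores(responses, question_dimension):
    """responses: list of {question_id: rating}. Returns {dimension: mean rating}."""
    buckets = defaultdict(list)
    for response in responses:
        for question_id, rating in response.items():
            buckets[question_dimension[question_id]].append(rating)
    return {dim: round(fmean(ratings), 2) for dim, ratings in buckets.items()}


# Hypothetical mapping: two usability questions, one stability question.
question_dimension = {"q1": "usability", "q2": "usability", "q3": "stability"}
responses = [
    {"q1": 4, "q2": 5, "q3": 2},
    {"q1": 5, "q2": 4, "q3": 2},
]
print(dimension_scores(responses, question_dimension))
# {'usability': 4.5, 'stability': 2.0}
```

Pooling all ratings in a dimension weights each answer equally; if some questions are skipped far more often than others, averaging per question first and then across questions may be the fairer choice.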

Cluster open-text responses by theme, not by question. Testers don't neatly organize their feedback by your survey structure. A response to "what would you improve?" might describe a bug. A response to "any other comments?" might contain a feature request. Read all open-text responses together and tag them by theme: navigation confusion, performance under load, missing documentation, specific feature gaps. If you're handling 100+ responses, AI-powered thematic analysis tools do this clustering in minutes instead of hours.
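
For small response volumes, even a keyword-based tagger shows the idea of reading across questions. The sketch below is a deliberately simple stand-in, not how AI-based tools work: the theme names and keyword lists are hypothetical, and exact-word matching will miss synonyms and misspellings.

```python
import re
from collections import Counter

# Hypothetical theme keywords; tune these to your own product vocabulary.
THEMES = {
    "navigation": {"find", "menu", "navigate", "lost"},
    "performance": {"slow", "lag", "timeout", "crash", "crashed"},
    "documentation": {"docs", "documentation", "instructions"},
}


def tag_themes(comment):
    """Return every theme whose keywords appear as whole words in the comment."""
    words = set(re.findall(r"[a-z]+", comment.lower()))
    return [theme for theme, keywords in THEMES.items() if words & keywords]


def theme_counts(comments):
    """Count theme mentions across all comments, whichever question they answered."""
    counts = Counter()
    for comment in comments:
        counts.update(tag_themes(comment))
    return counts


comments = [
    "Export is slow and the menu is hard to find",
    "The docs never mention the import limit",
    "It crashed twice when I uploaded a big file",
]
print(theme_counts(comments).most_common())
```

A comment can carry several tags, which matches how testers actually write: one sentence often mixes a bug, a complaint, and a request.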

Prioritize using a severity × frequency matrix. Not all issues are equal. A critical bug that 3% of testers hit is less urgent than a moderate usability issue that 70% of testers hit. Plot issues on two axes: how bad is it when it happens (severity) and how many testers experienced it (frequency). The top-right quadrant (high severity, high frequency) is your pre-launch must-fix list. The bottom-left quadrant can wait for post-launch iteration.

One pattern worth knowing: the matrix almost always surprises teams. The issues engineering assumes are critical often land in the low-frequency quadrant, while the issues product managers initially dismissed as "minor UX polish" turn out to be high-frequency blockers that the majority of testers hit. Let the data reorder your assumptions.
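
One way to put the matrix into practice is sketched below in Python. The 1–5 severity scale, the cut-offs (severity 3, 50% of testers), and the use of severity × frequency as a ranking score are assumptions chosen for illustration; set your own thresholds before the beta starts.

```python
def quadrant(severity, frequency, sev_cut=3, freq_cut=0.5):
    """severity: 1-5 rating; frequency: share of testers affected (0.0-1.0)."""
    high_sev = severity >= sev_cut
    high_freq = frequency >= freq_cut
    if high_sev and high_freq:
        return "must-fix before launch"
    if high_freq:
        return "high frequency, lower severity"
    if high_sev:
        return "high severity, low frequency"
    return "post-launch"


# Hypothetical issues mirroring the example in the text.
issues = [
    {"name": "crash on malformed import", "severity": 5, "frequency": 0.03},
    {"name": "confusing export modal", "severity": 3, "frequency": 0.70},
]

# Rank by severity x frequency so widespread moderate issues can outrank rare critical ones.
ranked = sorted(issues, key=lambda i: i["severity"] * i["frequency"], reverse=True)
for issue in ranked:
    print(issue["name"], "->", quadrant(issue["severity"], issue["frequency"]))
```

Here the moderate issue that 70% of testers hit scores 2.1 and ranks above the critical bug that 3% hit, which scores 0.15.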

Compare across survey waves. If you ran weekly pulse surveys, compare satisfaction scores across weeks. A downward trend means something regressed. An upward trend after a mid-beta fix confirms you solved the right problem. Trend data is more useful than snapshot data because it tells you the direction, not just the position.
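
A wave-over-wave comparison needs nothing more than the mean score per week and the change from the previous week. A minimal Python sketch, using invented 1–5 pulse ratings:

```python
from statistics import fmean


def weekly_trend(scores_by_week):
    """scores_by_week: {week_number: [ratings]}.

    Returns a list of (week, mean rating, change from previous week).
    """
    rows, previous = [], None
    for week in sorted(scores_by_week):
        average = round(fmean(scores_by_week[week]), 2)
        change = None if previous is None else round(average - previous, 2)
        rows.append((week, average, change))
        previous = average
    return rows


# Hypothetical weekly pulse ratings.
pulse = {1: [4, 4, 5], 2: [4, 5, 4], 3: [3, 2, 3]}
for week, average, change in weekly_trend(pulse):
    flag = "  <- investigate" if change is not None and change < 0 else ""
    print(f"week {week}: {average} ({change}){flag}")
```

In this made-up data the week-three drop is the signal to look at what shipped between the second and third surveys.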

Watch for silent testers. Survey results only represent testers who responded. If 40 people received the survey and 15 responded, the other 25 may have disengaged entirely. Cross-reference survey response rates with product usage data. Testers who stopped using the product and stopped responding to surveys are your highest-risk signal. A direct message asking what went wrong often surfaces the most critical issues your survey missed.
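
The cross-reference described above can be sketched as a set comparison. The seven-day inactivity cut-off and the data shapes below are assumptions; testers with no usage record at all are treated as inactive.

```python
def silent_testers(invited, responded, days_since_active, inactive_after=7):
    """Return invited testers who did not respond and have not used the product recently."""
    return sorted(
        tester for tester in invited
        if tester not in responded
        and days_since_active.get(tester, float("inf")) > inactive_after
    )


# Hypothetical cohort.
invited = {"ana", "ben", "chi", "dev"}
responded = {"ana"}
days_since_active = {"ana": 1, "ben": 12, "chi": 2}  # "dev" has no usage record

print(silent_testers(invited, responded, days_since_active))  # ['ben', 'dev']
```

Testers like "chi", who are still active but skipped the survey, are a different case: a reminder is usually enough, while the silent group warrants a direct message.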

How Do You Get the Most Out of a Beta Testing Survey?

Good collection mechanics matter. But most programs that produce weak beta feedback don't have a tools problem. They have a design and process problem. Here's what actually makes a difference.

Set clear goals before you build the survey. Know what decision each survey is informing before you write a single question. Is this round testing whether onboarding works? Whether testers understand the pricing model? Whether the mobile layout holds up? One survey, one primary goal. Everything else is secondary. When you're clear on this upfront, you write tighter questions and get responses you can actually use.

Encourage honest feedback, including the negative kind. Testers default to politeness, especially when they know the product team is reading their responses. Build for honesty by making the survey anonymous where possible, using neutral question framing, and explicitly telling testers you want to know what isn't working. An open-text box at the end with "What would you change about this?" gets you further than a rating scale alone. Some of the most useful product feedback examples come from exactly this kind of unstructured question.

Cap survey length and respect tester time. Survey fatigue in beta programs is real. Keep mid-beta surveys to 8–12 questions, post-onboarding surveys to 4–6, and wrap-up surveys to 5–7. Completion rates drop 5–10% for every additional minute past the five-minute mark. If you have more ground to cover, split into two surveys a week apart rather than sending one long form. Centercode's data shows that managed programs with focused surveys see participation rates above 90%, while unmanaged programs with broader surveys sit around 30%.

Use multiple feedback channels. Your testers aren't all checking email during a beta. Run surveys across the channels where they actually are: in-app feedback tools, email, and mobile survey apps for testing in the field. Multi-channel collection isn't about blasting more surveys. It's about catching feedback at the right moment in the right format.

Route responses to the right team automatically. Usability feedback should hit the design team's Slack channel. Bug reports should create Jira tickets. Feature requests should land in the product backlog. If all survey responses go into the same spreadsheet and someone manually triages them later, "later" often means "never." Build the routing before you launch the survey. Your internal product feedback process should define who owns which category of beta feedback before the first survey goes out.
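
At its simplest, routing is a lookup from feedback category to destination with a fallback owner. The sketch below uses placeholder destination strings, not real Slack or Jira API calls; in practice each destination would be handled by your survey tool's integration or a webhook.

```python
# Hypothetical destinations; each would map to an integration in a real setup.
ROUTES = {
    "usability": "slack:#design-feedback",
    "bug": "jira:create-ticket",
    "feature_request": "backlog:product",
}
DEFAULT_ROUTE = "triage:product-manager"  # named owner for anything uncategorized


def route(response):
    """Return the destination for a survey response based on its category."""
    return ROUTES.get(response.get("category"), DEFAULT_ROUTE)


print(route({"category": "bug", "text": "Export times out on large files"}))
print(route({"category": "pricing", "text": "Seat pricing is unclear"}))
```

The fallback route matters as much as the mapped ones: it guarantees that a response with an unexpected category still lands with a person instead of in a spreadsheet.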

Keep testers in the loop. This is where most programs lose momentum. Testers give feedback and then hear nothing. They don't know if it was read, whether it mattered, or whether anything changed. A weekly update (even a short message summarizing what feedback came in and what the team is doing about it) keeps engagement high through the full program. Teams that close the feedback loop with testers consistently see higher response rates on subsequent surveys.

Analyze and act before the beta ends. Some teams collect beta feedback and then spend weeks trying to make sense of hundreds of open-text responses. That delay is where insights go to die. Use a tool with AI analysis to group responses by theme as they come in, so by the time the beta period ends, you already know the top five issues to fix. The product feedback loop only works when the time between "tester said this" and "team acted on this" is short.

What Separates Good Beta Programs from Noise

The survey is the mechanism. What matters is whether testers' answers reach the people who build the product, in time to change it.

The best beta programs don't treat surveys as a data collection exercise. They treat them as a conversation with 40 or 100 or 500 people who are using the product for real, right now, and who are telling you exactly where it breaks. We saw this with LivingPackets. Surveys triggered by user behavior, not a calendar date. Responses routed automatically to the right team. Fixes pushed during the rollout. Users who could see their feedback reflected in the product before it shipped widely.

That's the difference between a beta that validates and a beta that decorates.

Your next beta survey doesn't need 30 questions. It needs the right 10, sent at the right moment, routed to the right team, with a visible loop back to the testers who took the time to respond.